AI / Legal-tech

AI contract intelligence on AWS

Fargate, Lambda, and multi-tenant delivery in six weeks.

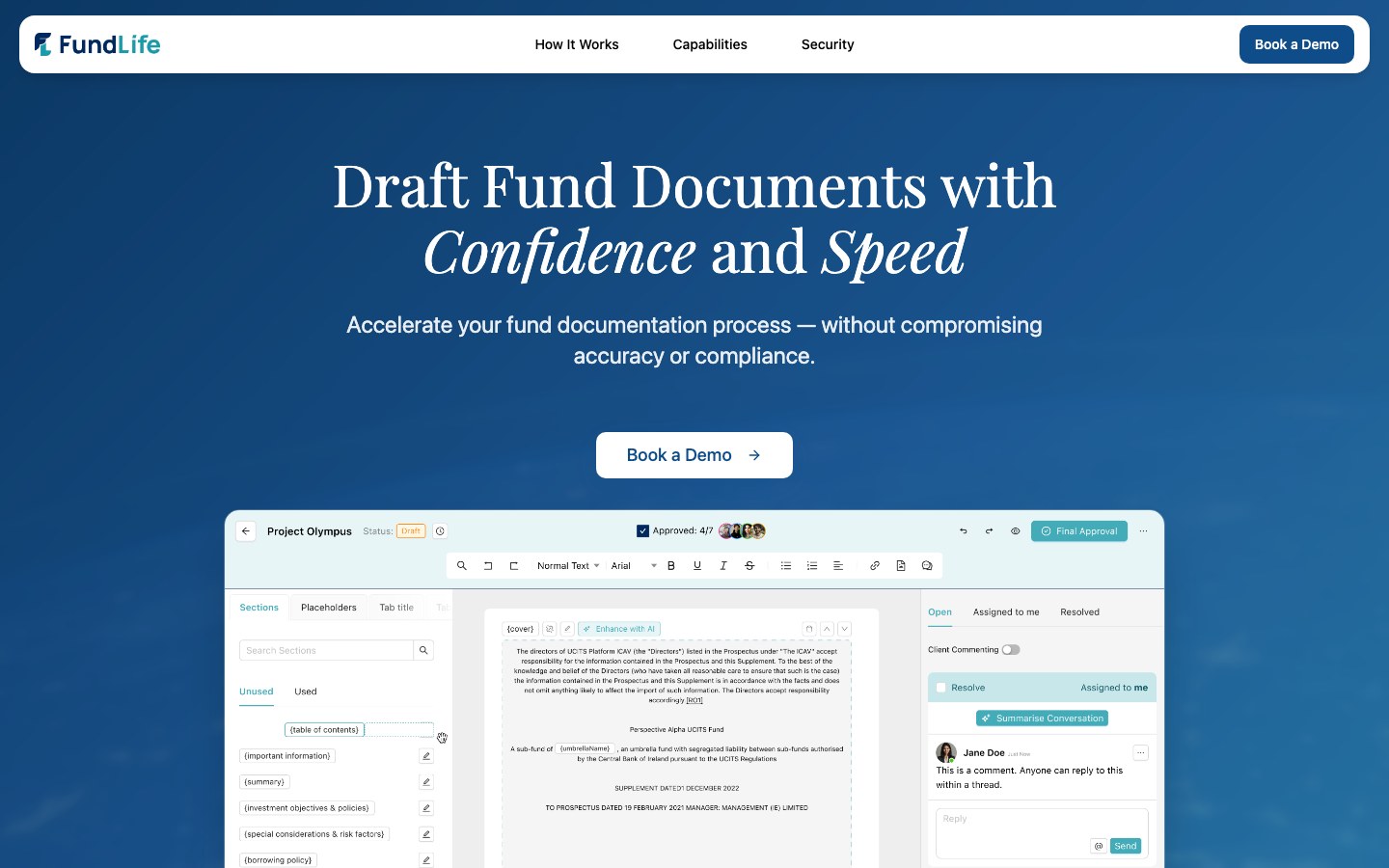

StationOps built and deployed the AWS infrastructure for Fundlife.ai’s AI-powered contract intelligence platform — application stack, AI/ML endpoints, multi-tenant data isolation, and CI/CD — in six weeks.

Engagement: 6 weeks, infrastructure delivery

Team: Cloud architect, Platform engineer, DevOps lead

Focus areas: Application infra, AI/ML deployment, multi-tenancy, CI/CD

- 4 AI endpoints deployed as Lambda functions behind API Gateway

- 3 services live on ECS Fargate with auto-deploy CI/CD

- Multi-tenant, tenant-isolated architecture with per-tenant subdomains and S3 buckets

- 6 weeks from first commit to production across app, API, and AI stack

Situation

Fundlife.ai is building an AI-powered contract intelligence platform that helps fund administrators draft, review, and analyse legal documents. The product team (Tribes Agency) had built the application — a Next.js frontend and Django backend — but needed AWS infrastructure to get it into production.

| Component | Stack | Specification |
|---|---|---|
| Frontend | Next.js | Containerised on ECS Fargate, custom domain (app.dev.fundlife.ai). |
| Backend | Django / Python | ECS Fargate, RDS PostgreSQL, tenant-isolated schemas via subdomain routing. |
| AI / ML | OpenAI + T5 transformer | Lambda functions behind API Gateway for text generation, conflict detection, entity recognition, and thesis extraction. |
| Platform | — | Multi-tenant S3 buckets (fundlife-{tenant}), GitHub Actions CI/CD, custom domains with ACM certs. |

What we did

01 Deployed the application stack

Stood up an ECS Fargate cluster in eu-west-1 with separate services for the Next.js frontend and Django backend, RDS PostgreSQL for the database, ECR repositories for container images, and ALBs with ACM certificates handling HTTPS. Configured custom domains (app.dev.fundlife.ai, api.dev.fundlife.ai) and resolved environment-specific issues including frontend build config and CORS settings.

02 Built the AI/ML endpoint layer

Refactored the data science team’s Python notebooks into deployable Lambda functions and stood up API Gateway at ai.dev.fundlife.ai with four endpoints: text generation (clause improvement and recommendations), conflict detection, entity recognition, and investment thesis extraction. Configured OpenAI API key management and S3 integration for tenant-specific training data.
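To illustrate the shape of one such endpoint, here is a minimal sketch of a Lambda handler behind an API Gateway proxy integration, using conflict detection as the example. The request field `text`, the response field `conflicts`, and the `detect_conflicts` helper are hypothetical placeholders; the actual Fundlife.ai request schema and model calls are not described in this case study.

```python
import json


def detect_conflicts(text: str) -> list:
    """Hypothetical stand-in for the real model call (OpenAI or T5)."""
    return []


def _response(status: int, body: dict) -> dict:
    # API Gateway proxy integrations expect statusCode, headers, and a string body.
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),
    }


def handler(event, context):
    """Entry point invoked by API Gateway (Lambda proxy integration)."""
    try:
        payload = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return _response(400, {"error": "body must be valid JSON"})

    if not isinstance(payload, dict):
        return _response(400, {"error": "body must be a JSON object"})

    text = payload.get("text")  # assumed field name
    if not isinstance(text, str) or not text.strip():
        return _response(400, {"error": "'text' is required"})

    return _response(200, {"conflicts": detect_conflicts(text)})
```

Keeping the handler this thin means the refactored notebook logic lives in ordinary functions that can be tested without API Gateway in the loop.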

03 Enabled multi-tenant architecture

Configured wildcard subdomain routing so each tenant gets a dedicated subdomain ({tenant}.api.dev.fundlife.ai) that maps to the same backend service, which uses the subdomain to route requests to the correct database schema. Created per-tenant S3 buckets (fundlife-{tenant}) for document and AI model data isolation.
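The routing described above can be sketched as a small tenant resolver. This is an illustrative sketch only: the domain and the `fundlife-{tenant}` bucket pattern come from the text, while the slug validation rule, the `tenant_` schema prefix, and the example tenant `acme` are assumptions and not the platform's actual code.

```python
import re
from typing import Optional

BASE_DOMAIN = "api.dev.fundlife.ai"

# Assumed slug rule: lowercase letters, digits, and inner hyphens only,
# which keeps the slug usable inside an S3 bucket name.
_SLUG = re.compile(r"^[a-z0-9](?:[a-z0-9-]*[a-z0-9])?$")


def tenant_from_host(host: str) -> Optional[str]:
    """Return the tenant slug from a Host header, or None if it does not match."""
    hostname = host.split(":", 1)[0].strip().lower().rstrip(".")
    suffix = "." + BASE_DOMAIN
    if not hostname.endswith(suffix):
        return None
    slug = hostname[: -len(suffix)]
    return slug if _SLUG.match(slug) else None


def bucket_for(tenant: str) -> str:
    """Per-tenant bucket name, following the fundlife-{tenant} pattern."""
    return f"fundlife-{tenant}"


def schema_for(tenant: str) -> str:
    """Hypothetical schema naming: prefix plus slug with hyphens mapped to underscores."""
    return "tenant_" + tenant.replace("-", "_")


tenant = tenant_from_host("acme.api.dev.fundlife.ai")
print(tenant, bucket_for(tenant), schema_for(tenant))
```

Rejecting any host that does not match the expected pattern, rather than falling back to a default tenant, keeps an unrecognised subdomain from ever reaching another tenant's schema or bucket.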

04 Wired CI/CD from GitHub

Built GitHub Actions pipelines that auto-build Docker images and push to ECR on merge to the dev branch. ECS services pick up the new image tag and roll out automatically — zero-touch deployments for the product team.
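One common way to roll a new image tag onto an ECS service is to register a new task definition revision that points at the freshly pushed image. The sketch below shows only that transformation step as a pure function, assuming a task definition dictionary with a `containerDefinitions` list whose entries carry `name` and `image` keys. The container name and image URI in the example are hypothetical, and the pipeline described above may use a different mechanism.

```python
import copy


def with_new_image(task_definition: dict, container_name: str, image_uri: str) -> dict:
    """Return a copy of the task definition with one container's image replaced."""
    updated = copy.deepcopy(task_definition)  # leave the caller's dict untouched
    matched = False
    for container in updated.get("containerDefinitions", []):
        if container.get("name") == container_name:
            container["image"] = image_uri
            matched = True
    if not matched:
        raise ValueError(f"no container named {container_name!r}")
    return updated


# Hypothetical example values; real names and URIs are not given in the case study.
current = {
    "family": "backend",
    "containerDefinitions": [{"name": "django", "image": "example-repo/backend:old"}],
}
new_revision = with_new_image(current, "django", "example-repo/backend:abc123")
print(new_revision["containerDefinitions"][0]["image"])
```

Tagging images with something unique per build, such as the commit SHA, makes each rollout traceable to the merge that triggered it.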

Fundlife.ai — delivery summary

The stack runs in eu-west-1: ECS Fargate for Next.js and Django, RDS PostgreSQL with tenant schemas, API Gateway and Lambda for AI workloads (OpenAI + T5), wildcard subdomains, per-tenant S3 buckets, Route 53 and ACM, and GitHub Actions pushing to ECR for zero-touch ECS rollout.

Deliverables

- ECS Fargate application cluster — frontend and backend services on Fargate with ALBs, ACM certs, and custom domains in eu-west-1.

- RDS PostgreSQL database — managed database instance with tenant-isolated schemas and automated backups.

- AI Lambda endpoints — four Lambda functions behind API Gateway at ai.dev.fundlife.ai: text generation, conflict detection, entity recognition, thesis extraction.

- Multi-tenant infrastructure — wildcard subdomain routing, per-tenant S3 buckets, and schema-level data isolation.

- CI/CD pipeline — GitHub Actions to ECR to ECS auto-deploy on merge to dev branch.

- Domain & certificate management — fundlife.ai domain migrated to Route 53 with ACM certificates for all subdomains.

Technologies & architecture (at a glance)

AWS ECS Fargate, Application Load Balancers, Amazon ECR, Amazon RDS (PostgreSQL), AWS Lambda, Amazon API Gateway, Amazon S3, AWS Certificate Manager, Amazon Route 53, GitHub Actions CI/CD — plus OpenAI and T5-based inference on serverless compute.

Timeline

Six weeks from initial deploy to full production stack including AI endpoints. Scope expanded during the engagement as the product team added AI features and multi-tenant requirements.

Week 1 Initial application deploy

ECS cluster, ECR repos, RDS PostgreSQL, and ALB provisioned. Frontend and backend services live on temporary domains ahead of custom domain cutover.

Weeks 2–3 CI/CD, domains & environment config

GitHub Actions pipelines wired, fundlife.ai domain migrated, custom subdomains configured, CORS and environment variable issues resolved.

Weeks 4–5 AI/ML layer & multi-tenancy

Lambda functions deployed behind API Gateway, notebook code refactored, wildcard subdomain routing and per-tenant S3 buckets configured.

Week 6 Testing, hardening & handoff

End-to-end testing across all endpoints, security review, and operational handoff to the product team.

Impact & ROI

Fundlife.ai went from application code on localhost to a production platform they could demo to clients in six weeks. Without StationOps, the product team would have needed to hire a DevOps engineer, learn AWS, and piece together the infrastructure themselves — burning months before a single client could see the product.

The comparison below shows what this would typically look like as an internal effort.

| Dimension | Typical internal, manual build | With StationOps engagement |
|---|---|---|
| Timeline | 3–5 months to stand up ECS, RDS, CI/CD, API Gateway, Lambda, and multi-tenant infrastructure. | 6 weeks — full stack including AI endpoints, multi-tenancy, and CI/CD. |
| Engineering effort | 3–6 person-months (≈ 480–960 hours, at roughly 160 hours per person-month) of DevOps/platform engineering the product team didn’t have. | Product team stayed focused on features; the first client demo happened during the engagement. |
| Fully-loaded cost | Roughly €45k–€100k in hiring and engineering time, before the cost of delayed revenue. | Engagement unblocked client demos and revenue conversations months earlier than the internal path. |

Figures shown are typical ranges for comparable work and will vary by baseline maturity, constraints, and team size.

- Product team unblocked — Tribes Agency could develop and demo features against real infrastructure instead of localhost, enabling the first client demo within weeks.

- AI features in production — four AI endpoints live and callable from the frontend, with the T5 model and OpenAI integration running on serverless compute.

- Tenant-ready from day one — multi-tenant data isolation baked into the architecture: new tenants onboarded by creating a subdomain and S3 bucket.

- Zero-touch deployments — product engineers merge to dev and changes go live automatically across the full stack.

“StationOps took our application code and gave us a production platform we could demo to clients. The AI endpoints, multi-tenancy, and CI/CD pipeline all just worked.”
